For a more recent comment on MLB scheduling and the Stephensons, see my response to the 30 for 30 video.

The relationship between operations research and sports is one topic that I return to often on this site. This is not surprising: I am co-owner of a small sports scheduling company that provides schedules to Major League Baseball and their umpires, to many college-level conferences, and even to my local kids’ soccer league. Sports has also been a big part of my research career. Checking my vita, I see that about 30% of my journal or book chapter papers are on sports or games, and almost 50% of my competitive conference publications are in those fields. Twenty years ago, my advisor, Don Ratliff, when looking over my somewhat eclectic vita at the time (everything from polymatroidal flows to voting systems to optimization implementation), told me that while it was great to work in lots of fields, it is important to be known for something. To the extent that I am known for something at this point, it is either for online stuff like this blog and or-exchange, or for my part in the great increase of operations research in sports, and sports scheduling in particular.

This started, as most things in life do, by accident. I was talking to one of my MBA students after class (I was younger then, and childless, so I generally took my class out for drinks a couple of times a semester after class) and it turned out he worked for the Pittsburgh Pirates (the local baseball team). We started discussing how the baseball schedule was created, and I mentioned that I thought the operations research techniques I was teaching (like integer programming) might be useful in creating the schedule. Next thing I know, I get a call from Doug Bureman, who had recently worked for the Pirates and was embarking on a consulting career. Doug knew a lot about what Major League Baseball might look for in a schedule, and thought we would make a good team in putting together a schedule. That was in 1996. It took until 2005 for MLB to accept one of our schedules for play. Why the wait? It turned out that the incumbent schedulers, Henry and Holly Stephenson, were very good at what they did. And, at the time, the understanding of how to create good schedules didn’t go much beyond work on minimizing breaks (consecutive home games or away games) in schedules, work done by de Werra and a few others. Over the decade from 1996 to 2005, we learned things about what does work and what doesn’t work in sports scheduling, so we got better on the algorithmic side. But even more important was the vast increase in speed in solving linear and integer programs. Between improvements in codes like CPLEX and increases in the speed of computers, my models were solving millions of times faster in 2005 than they did in 1996. So finally we were able to create very good schedules quickly and predictably.
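For readers who have not met the term, here is a minimal sketch of what counting "breaks" means. It is only an illustration, not code from our scheduling system: the function name `count_breaks`, the four teams, and their home/away patterns are all made up for the example.

```python
def count_breaks(pattern: str) -> int:
    """Count breaks in a home/away pattern such as "HAHHA".

    A break occurs whenever a team plays two consecutive games
    of the same type (home-home or away-away).
    """
    return sum(1 for prev, cur in zip(pattern, pattern[1:]) if prev == cur)


# Hypothetical home/away patterns for a four-team league,
# one string per team, one character per round.
patterns = {
    "A": "HAHAHA",
    "B": "AHAHAH",
    "C": "HHAHAA",
    "D": "AAHAHH",
}

breaks_per_team = {team: count_breaks(p) for team, p in patterns.items()}
total_breaks = sum(breaks_per_team.values())
print(breaks_per_team, total_breaks)
```

A perfectly alternating pattern has no breaks at all. The early literature asked how small the total can be made across a whole league.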

In those intervening years, I didn’t spend all of my time on Major League Baseball of course. I hooked up with George Nemhauser, and we scheduled Atlantic Coast Conference basketball for years. George and I co-advised a great doctoral student, Kelly Easton, who worked with us after graduation and began doing more and more scheduling, particularly after we combined the baseball activities (with Doug) and the college stuff (with George).

After fifteen years, I still find the area of sports scheduling fascinating. Patricia Randall, in a recent blog post (part of the INFORMS Monthly Blog Challenge, as is this post), addressed the question of why sports is such a popular area of application. She points to the way many of us know at least something about sports:

I think the answer lies in the accessibility of the data and results of a sports application of OR. Often only a handful of people know enough about an OR problem to be able to fully understand the problem’s data and judge the quality of potential solutions. For instance, in an airline’s crew scheduling problem, few people may be able to look at a sequence of flights and immediately realize the sequence won’t work because it exceeds the crew’s available duty hours or the plane’s fuel capacity. The group of people who do have this expertise are probably heavily involved in the airline industry. It’s unlikely that an outsider could come in and immediately understand the intricacies of the problem and its solution.

But many people, of all ages and occupations, are sports fans. They are familiar with the rules of various sports, the teams that comprise a professional league, and the major players or superstars. This working knowledge of sports makes it easier to understand the data that would go into an optimization model as well as analyze the solutions it produces.

I agree that this is a big reason for the popularity. When I give a sports scheduling talk, I know I can simply put up the schedule of the local team, and the audience will be immediately engaged and interested in how it was put together. In fact, the hard part is to get people to stop talking about the schedule so I can get on to talking about Benders’ approaches or large scale local search or whatever is the real content of my talk.

But let me add to Patricia’s comments: there are lots of reasons why sports is so popular in OR (or at least for me).

First, we shouldn’t ignore the fact that sports is big business. Forbes puts the value of the teams of Major League Baseball at over $15 billion, with the Yankees alone worth $1.7 billion. With values like that, it is not surprising that there is interest in using data to make better decisions. Lots of sports leagues around the world also have large economic effects, making sports a significant part of the overall economy.

Second, there are a tremendous number of issues in sports, making it applicable and of interest to a wide variety of researchers. I do essentially all my work in scheduling, but there are lots of other areas of research. If you check out the MIT Sports Analytics conference, you can see the range of topics covered. By covering statistics, optimization, marketing, economics, competition and lots of other areas, sports can attract interest from a variety of perspectives, making it richer and more interesting.

A third reason that sports has a strong appeal, at least in my subarea of scheduling, is the close match between what can be solved and what needs to be solved. For some problems, we can solve far larger instances than would routinely occur in practice. An example of this might be the Traveling Salesman Problem. Are there real instances of the TSP that people want to solve to optimality that cannot be solved by Concorde? We have advanced so far in solving the problem that the vast majority of practical applications are now handled. Conversely, there are problems where our ability to solve them is dwarfed by the size of problem that occurs in practice. We would like to understand, say, optimal poker play for Texas Hold’em (a game where each player works with seven cards, five of them in common with other players). Current research is on Rhode Island Hold’em, where there are three cards and strong limitations on betting strategy. We are a long way from optimal poker play.

Sports scheduling is right in the middle. A decade ago, my coauthors and I created a problem called the Traveling Tournament Problem. This problem abstracts out the key issues of baseball scheduling but provides instances of any size. The current state of the art can solve the 10 team instances to optimality, but cannot solve the 12 team instances. There are lots of sports scheduling problems where 10-20 teams is challenging. Many real sports leagues are, of course, also in the 10-20 team range. This confluence of theoretical challenge and practical interest clearly adds to the research enthusiasm in the area.
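To give a feel for what the Traveling Tournament Problem measures, here is a minimal sketch of the travel calculation for a single team. It assumes the usual formulation in which a team starts at home, travels directly from one away venue to the next during a road trip, and returns home after its last game. The function `travel_distance`, the three-team league, and the distances are hypothetical, chosen only for illustration.

```python
from typing import Dict, List, Tuple

# One entry per round, from this team's point of view: (opponent, is_home).
Games = List[Tuple[str, bool]]


def travel_distance(team: str, games: Games, dist: Dict[str, Dict[str, int]]) -> int:
    """Total distance a team travels over its sequence of games."""
    location = team  # the team starts the season at home
    total = 0
    for opponent, is_home in games:
        venue = team if is_home else opponent
        total += dist[location][venue]
        location = venue
    total += dist[location][team]  # return home after the last game
    return total


# Hypothetical symmetric distances between three teams' home venues.
dist = {
    "A": {"A": 0, "B": 10, "C": 15},
    "B": {"A": 10, "B": 0, "C": 20},
    "C": {"A": 15, "B": 20, "C": 0},
}

# Team A plays both away games back to back (one road trip) ...
road_trip = [("B", False), ("C", False), ("B", True), ("C", True)]
# ... or comes home between them.
alternating = [("B", False), ("B", True), ("C", False), ("C", True)]

print(travel_distance("A", road_trip, dist))    # 45
print(travel_distance("A", alternating, dist))  # 50
```

The road trip saves travel here, but it also forces consecutive away games. The difficulty of the problem comes from trading off travel against limits on such runs, for every team at once.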

Finally, there is an immediacy and directness to sports scheduling that makes it personally rewarding. In much of what I do, waiting is a big aspect: I need to wait a year or two for a paper to be accepted, or for a research agenda to come to fruition. It is gratifying to see people play sports, whether it is my son in his kids’ soccer game or Derek Jeter in Yankee Stadium, and know not only that they are there because programs on my computer told them to be, but also that the time from scheduling to play is measured in months or weeks.

This entry is part of the March INFORMS Blog Challenge on Operations Research and Sports.

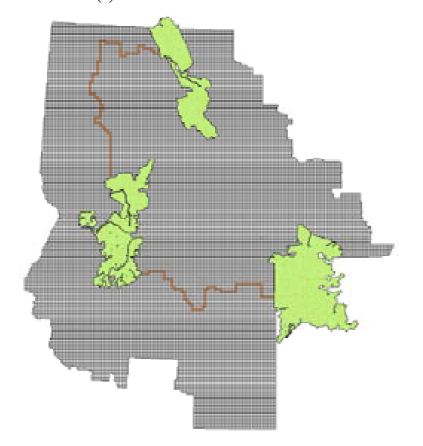

My colleague, Willem van Hoeve, had worked on variants of this problem and had some nice data for the students to work with. The models were interesting in their own right, with the “contiguity” constraints causing the most challenge to the students. The results of the project were corridors that were much cheaper (by a factor of 10) than the estimates of the cost necessary to support the wildlife. The students did a great job (as Tepper MBA students generally do!) using AIMMS to model and find solutions (are there other MBA students who come out knowing AIMMS? Not many I would bet!). But I was left with a big worry. The goal here was to find corridors linking “safe” regions for the grizzlies. But what keeps the grizzlies in the corridors? If you check out the diagram (not from the student project but from a research paper by van Hoeve and his coauthors), you will see the safe areas in green, connected by thin brown lines representing the corridors. It does seem that any self-respecting grizzly would say: “Hmmm…. I could walk 300 miles along this trail they have made for me, or go cross country and save a few miles.” The fact that the cross country trip goes straight through, say, Bozeman, Montana, would be unfortunate for the grizzly (and perhaps the Bozemanians). But perhaps the corridors could be made appealing enough for the grizzlies to keep them off the interstates.

I was made for this project! First, I have the experience of watching my students work on grizzly ecosystems (hey, I am a professor: seeing a student do something is practically as good as doing it myself). Second, and more importantly, I have extensive experience in bamboo, which is, of course, the main food of the panda. My wife and I planted a “non-creeping” bamboo plant three years ago, and I have spent two years trying to exterminate it from our backyard without resorting to napalm. I was deep into negotiations to import a panda into Pittsburgh to eat the cursed plant before I finally seemed to gain the upper hand on the bamboo. But I fully expect the plant to reappear every time we go away for a weekend.